What if unspoken feelings, fears, and desires could manifest as architectural elements that reflect the experiences and feelings of a community, perhaps even expressing empathy? How could architecture be a more active contributor to our social and psychological wellbeing?

Parasympathy is an interactive installation operating as an extension of visitors’ minds. The name “Parasympathy” is derived from the parasympathetic nervous system and seeks to provide a similar service: to calm and restore.

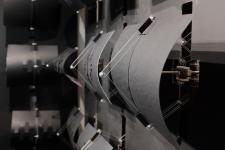

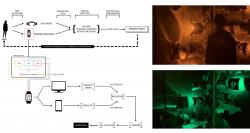

The project leveraged Artificial Intelligence as extended intelligence and relied on the human brain for information processing, employing wearable technology and sensory environments to create a process in which synapses in the brain triggered responses in the installation, ultimately modulating emotion. The method is unique in its use of wearable technology (i.e., the Empatica E4 and OpenBCI EEG) as prostheses that collect biodata, and in its integration of AI and neuroscience for real-time emotion detection and communication with an intelligent interactive installation, synchronizing changes in the space with the emotional state of the individuals within it.

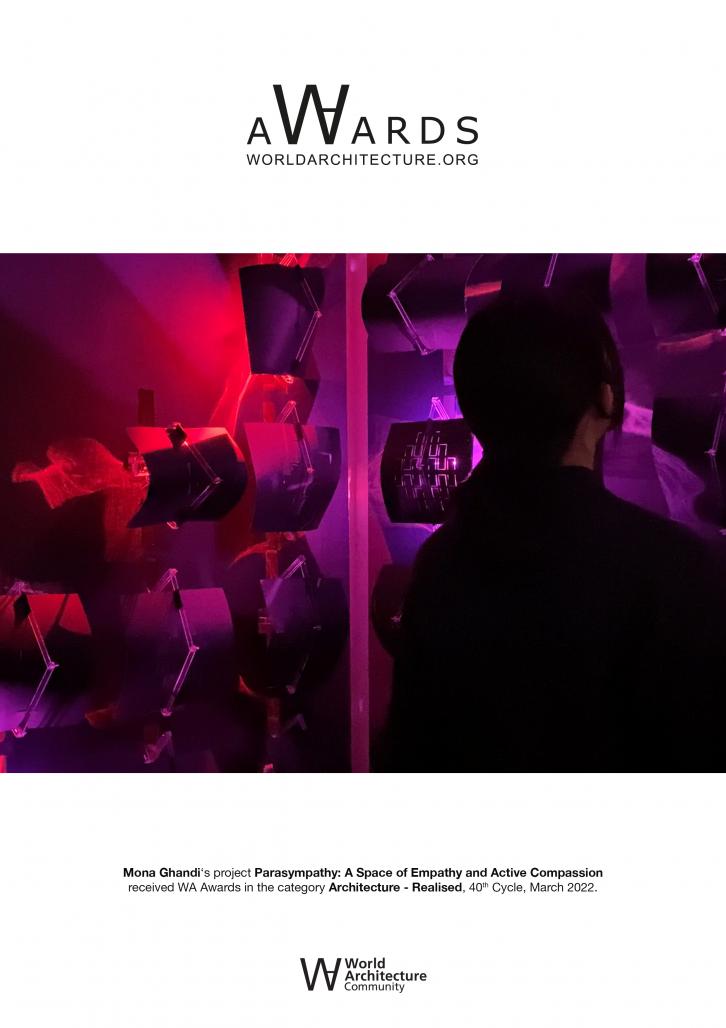

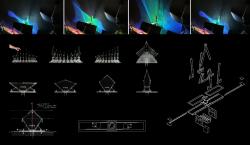

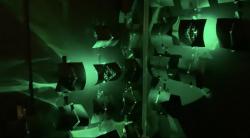

Parasympathy is made of a series of kinetic tiles that fold and fluctuate in a calculated rhythm, producing a spectacle of color and pattern akin to the northern lights. The effect was contingent upon the involvement of its users: a smart wristband gathered biophysical data (i.e., heart rate, electrodermal activity, blood volume pulse, and temperature), which our ML algorithm analyzed and translated into emotion categories. The installation was then calibrated in real time to respond to this data by changing patterns and colors, creating an ambience that would improve the users’ emotions. For example, if stress was detected, the space morphed and its colors shifted to calming bright colors such as blue. Based on earlier color studies, we assigned different colors to different emotions to navigate and index the moods of the users.
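The emotion-to-color indexing described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the project's actual code: only the pairing of stress with blue comes from the description, while the other emotion names, the RGB values, the neutral fallback, and the helper names `color_for_emotion` and `blend` are hypothetical.

```python
from typing import Dict, Tuple

RGB = Tuple[int, int, int]

# Hypothetical palette. Only "stress -> blue" is taken from the project text;
# every other entry and all RGB values are assumptions for illustration.
EMOTION_COLORS: Dict[str, RGB] = {
    "stress": (0, 120, 255),   # calming blue, as in the description
    "calm": (0, 200, 120),     # assumed
    "excited": (255, 140, 0),  # assumed
}
NEUTRAL: RGB = (255, 255, 255)  # assumed fallback for unknown categories


def color_for_emotion(emotion: str) -> RGB:
    """Look up the target color for a predicted emotion category."""
    return EMOTION_COLORS.get(emotion.strip().lower(), NEUTRAL)


def blend(current: RGB, target: RGB, t: float) -> RGB:
    """Linearly interpolate from current to target; t is clamped to [0, 1]."""
    t = max(0.0, min(1.0, t))
    return tuple(round(c + (g - c) * t) for c, g in zip(current, target))
```

Calling `blend` repeatedly with a small `t` would move the LEDs gradually toward the new color, so a change in the detected emotion does not produce an abrupt jump.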

By using each individual’s biosignature as a noticeable trace, this user-centric installation is a medium that actively responds to the mood of the community, helping to promote communication for those who often go unheard. This installation demonstrates responsible uses of emerging technology that can promote social awareness, equitable design, and the agency of the democratic populace. It contributes to research on cyber-physical design and the interaction of technology and empathy. This project had a singular objective: to reconcile the relationship between humans and architecture and redefine it as one of emotional empathy and active compassion.

By integrating Artificial Intelligence (AI), wearable technologies, affective computing, and neuroscience, this project blurs the lines between the physical, digital, and biological spheres and empowers users’ brains to solicit positive changes from their spaces based on their real-time biophysical reactions and emotions. While becoming aware of their mental state through the cell phone app we developed, visitors found that the therapeutic immersion in color and light increased their sense of self-awareness, because their involvement played a key role in activating the space. Users learned about their emotional and physiological states and thus acquired a tool to enhance, mitigate, or simply become aware of their emotions.

2020

The installation comprised four 4’ x 8’ panels in the corner of a gallery, drawing users to the center of the space to amplify the sense of immersion. Each panel consisted of a grid of retractable tiles that acted as the kinetic component of the installation. Nestled within each module was a colored LED light that activated in concert with the module’s movement and the detected emotion. A Raspberry Pi queried the web server to read the last predicted emotional state and calibrated the installation accordingly.
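The polling step, in which the Raspberry Pi reads the last predicted emotional state from the web server, might look roughly like the sketch below. The endpoint URL, the JSON shape (`{"emotion": "..."}`), the polling interval, and the function names are all assumptions made for illustration; the source does not describe the actual protocol.

```python
import json
import time
from typing import Callable, Optional
from urllib.request import urlopen

# Hypothetical endpoint; the real server address is not given in the source.
STATE_URL = "http://example.local/latest-emotion"


def fetch_latest_emotion(url: str = STATE_URL, timeout: float = 2.0) -> Optional[str]:
    """Return the most recent predicted emotion, or None if the request fails.

    Assumes the server answers with JSON such as {"emotion": "stress"}.
    """
    try:
        with urlopen(url, timeout=timeout) as resp:
            payload = json.loads(resp.read().decode("utf-8"))
    except (OSError, ValueError):  # network errors and malformed JSON
        return None
    return payload.get("emotion") if isinstance(payload, dict) else None


def poll(fetch: Callable[[], Optional[str]],
         apply: Callable[[str], None],
         cycles: int,
         interval_s: float = 1.0,
         sleep: Callable[[float], None] = time.sleep) -> Optional[str]:
    """Poll `fetch` for `cycles` rounds and call `apply` only when the state changes."""
    last: Optional[str] = None
    for _ in range(cycles):
        emotion = fetch()
        if emotion is not None and emotion != last:
            apply(emotion)  # e.g. drive the tiles and LEDs for the new state
            last = emotion
        sleep(interval_s)
    return last
```

Passing `fetch` and `apply` in as callables keeps the loop independent of the hardware, so it can be exercised on a laptop with stub functions before being connected to the tiles and LEDs.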

This cognition-emotion-space interaction system has the potential to serve as a method of remedial therapy and to provide augmented assisted living for older adults and people with physical and mental disabilities, ultimately empowering them to regain control over their environments and live more equal and independent lifestyles.

Link to the project video: https://www.youtube.com/watch?v=qrUnVqfXy8Y

Team Morphogenesis Lab – Washington State University

Morphogenesis Lab Director: Mona Ghandi

Design: Mona Ghandi, Mohamed Ismail

Fabrication: Mona Ghandi, Mohamed Ismail, Shanle Lin, Aisha Marcos, Ruri Adams, Jessie Lu, Marcus Blaisdell

Programming & Electrical: Marcus Blaisdell

Cinematography: Nicole Liu, Mohamed Ismail

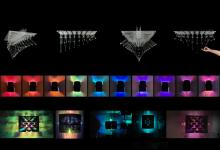

Parasympathy: A Space of Empathy and Active Compassion by Mona Ghandi in the United States won the WA Award Cycle 40. Please find below the WA Award poster for this project.